How to use Deep Learning for Marketing?

Master Seminar co-taught with Markus Meierer

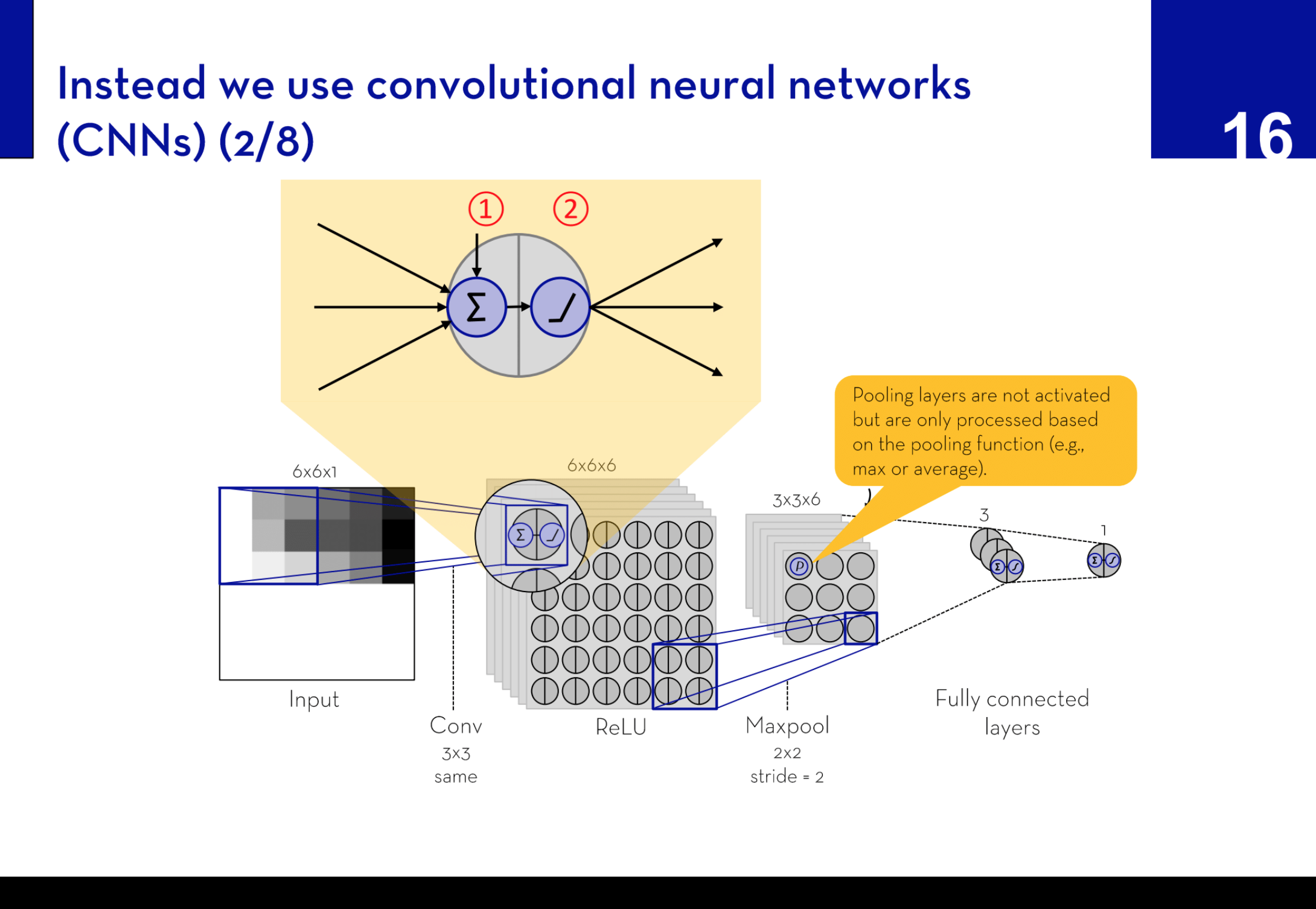

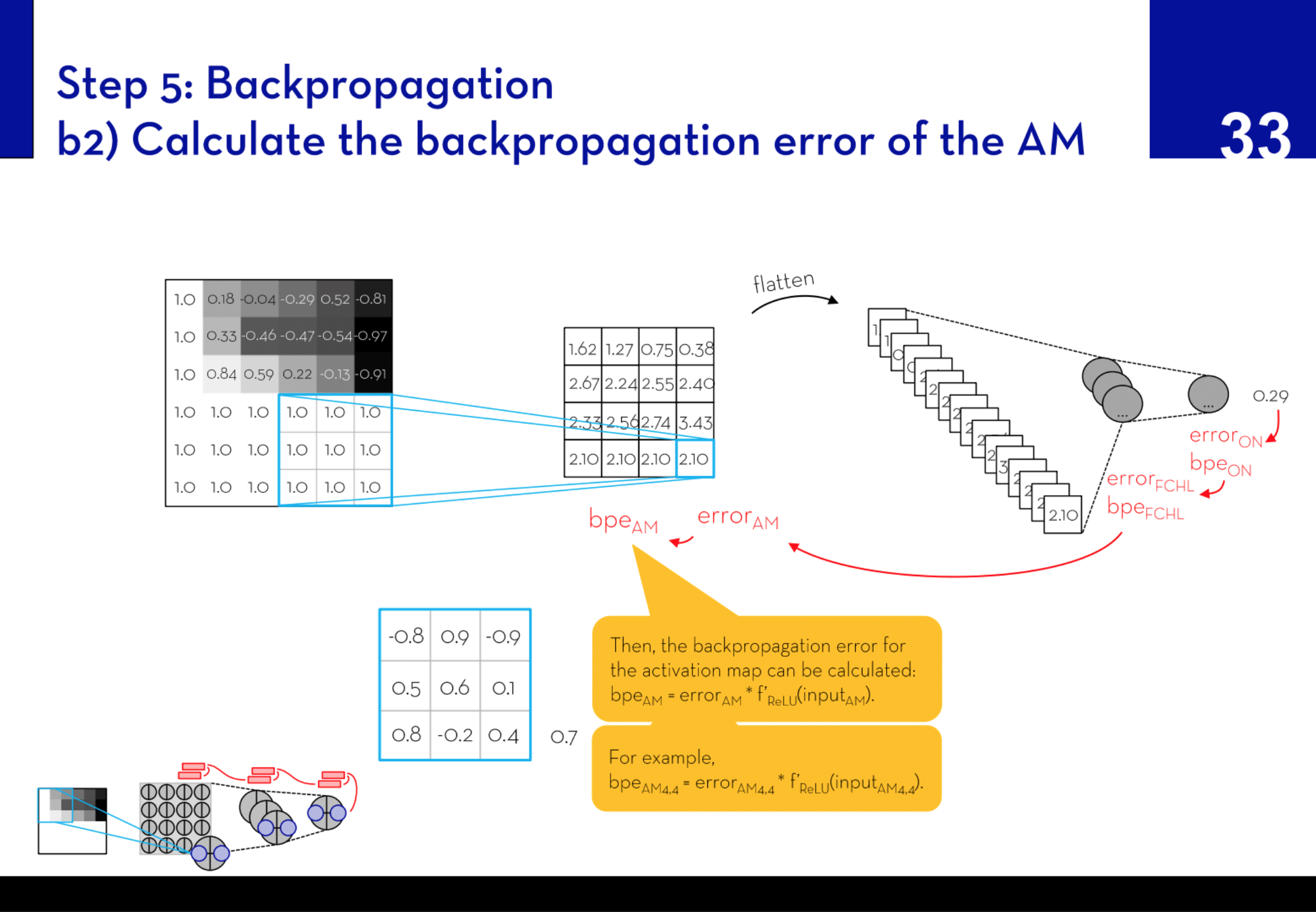

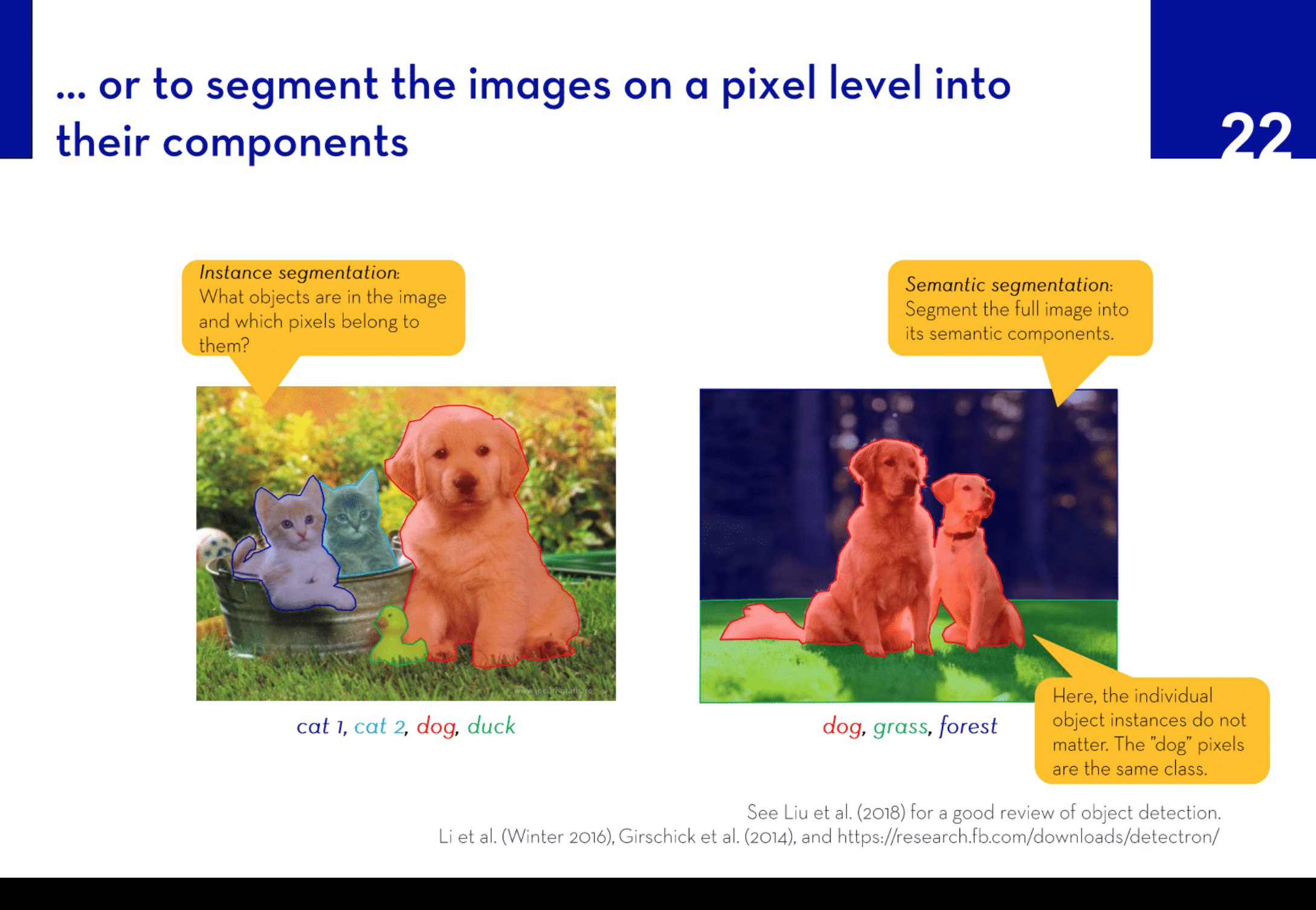

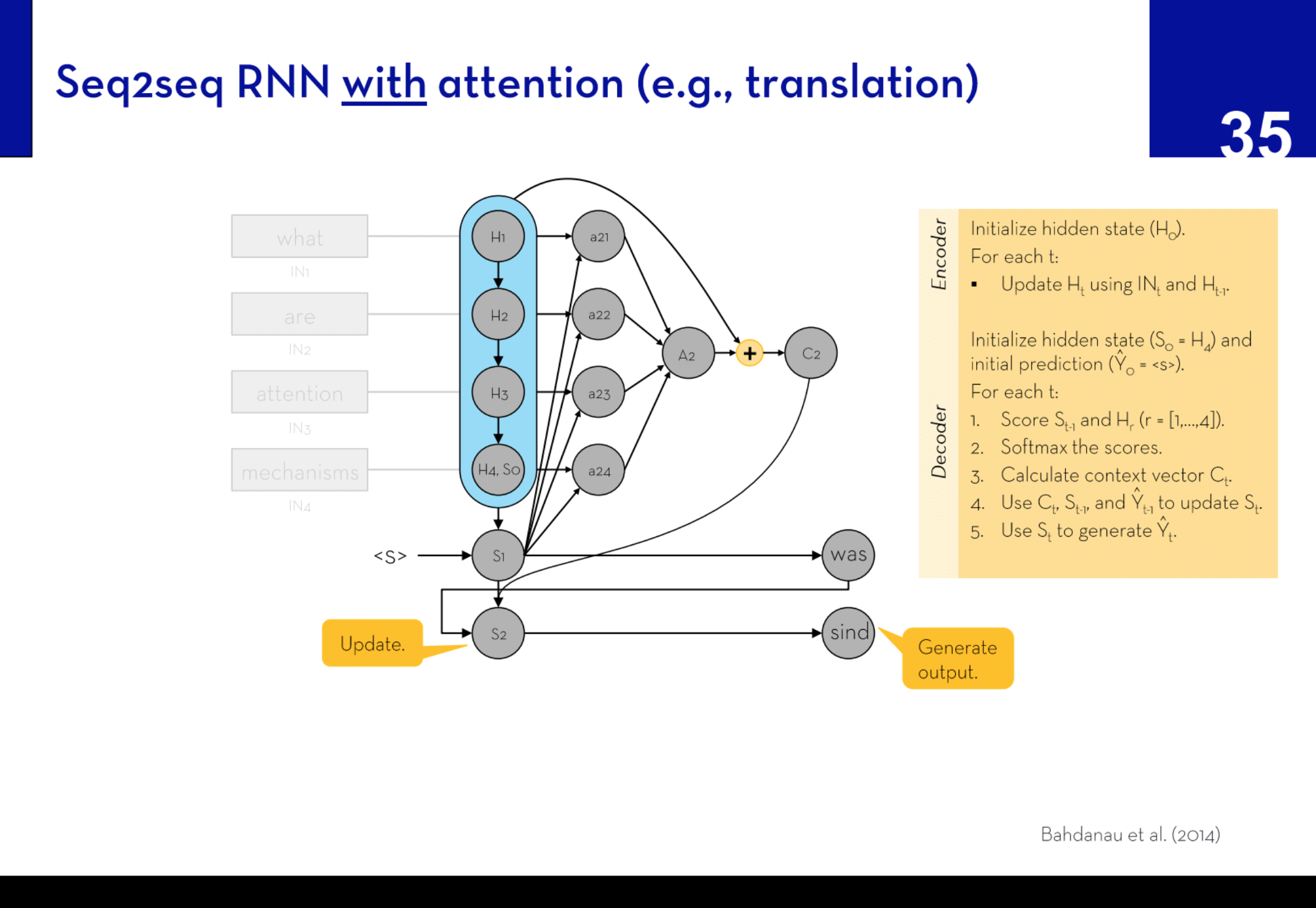

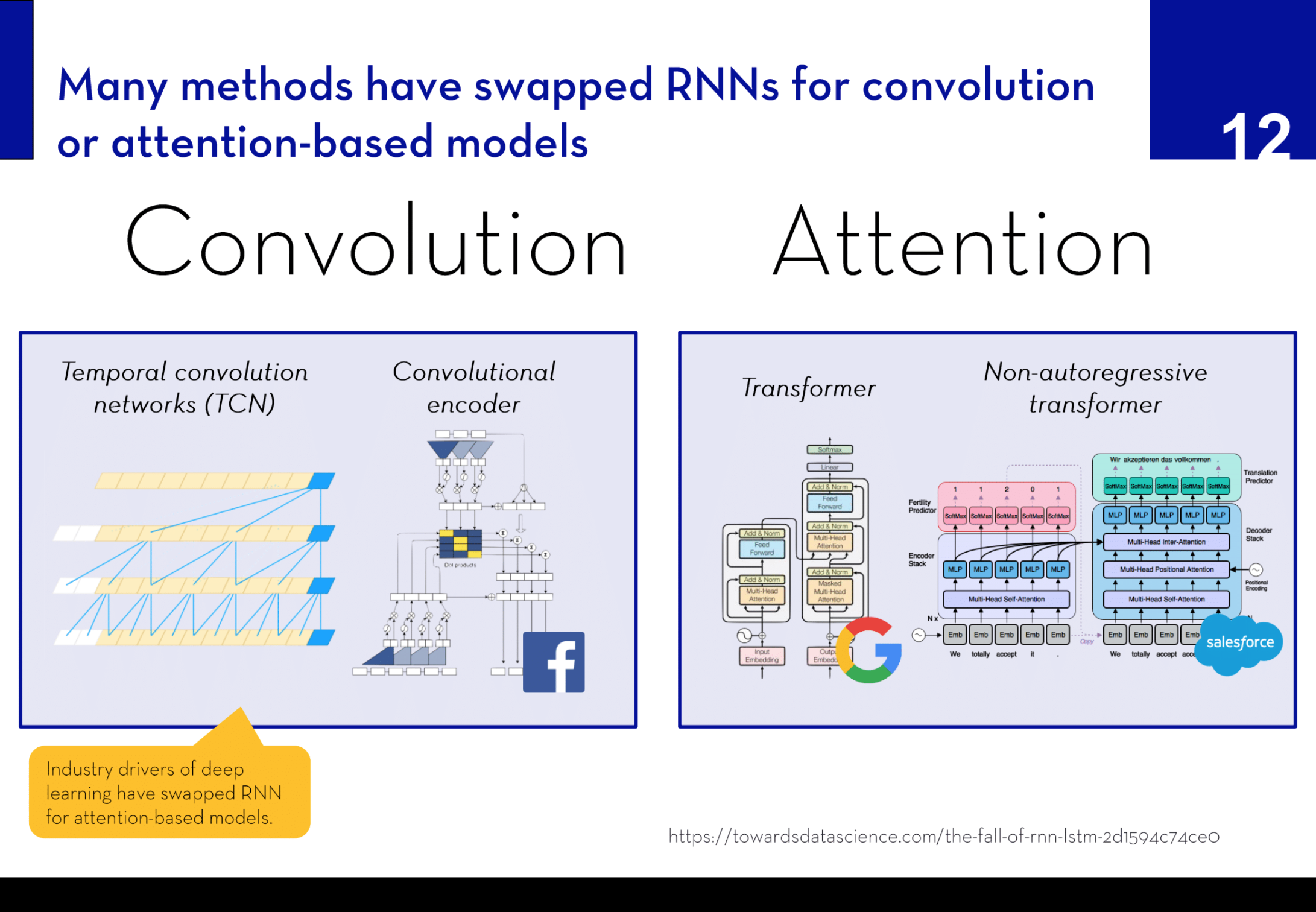

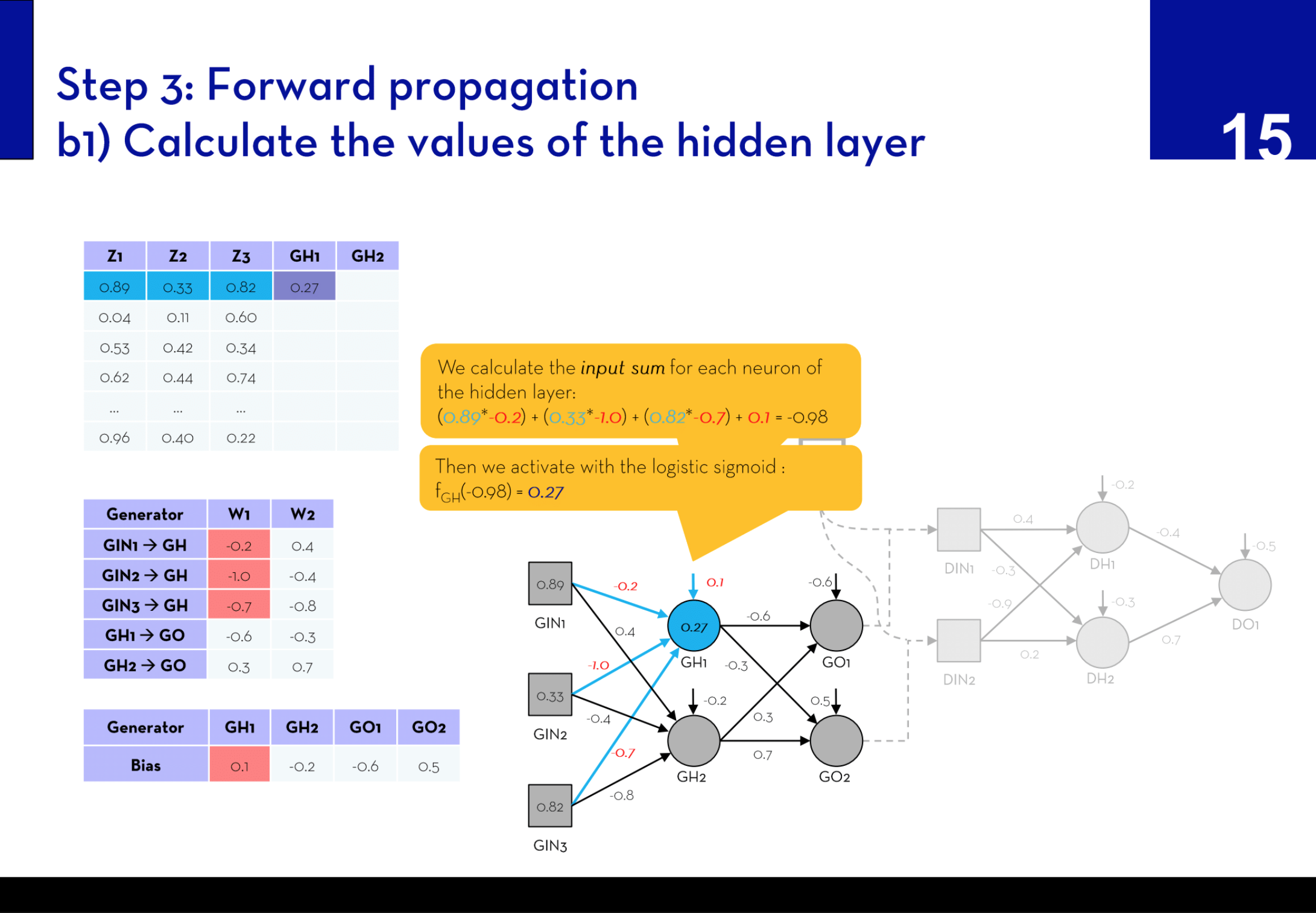

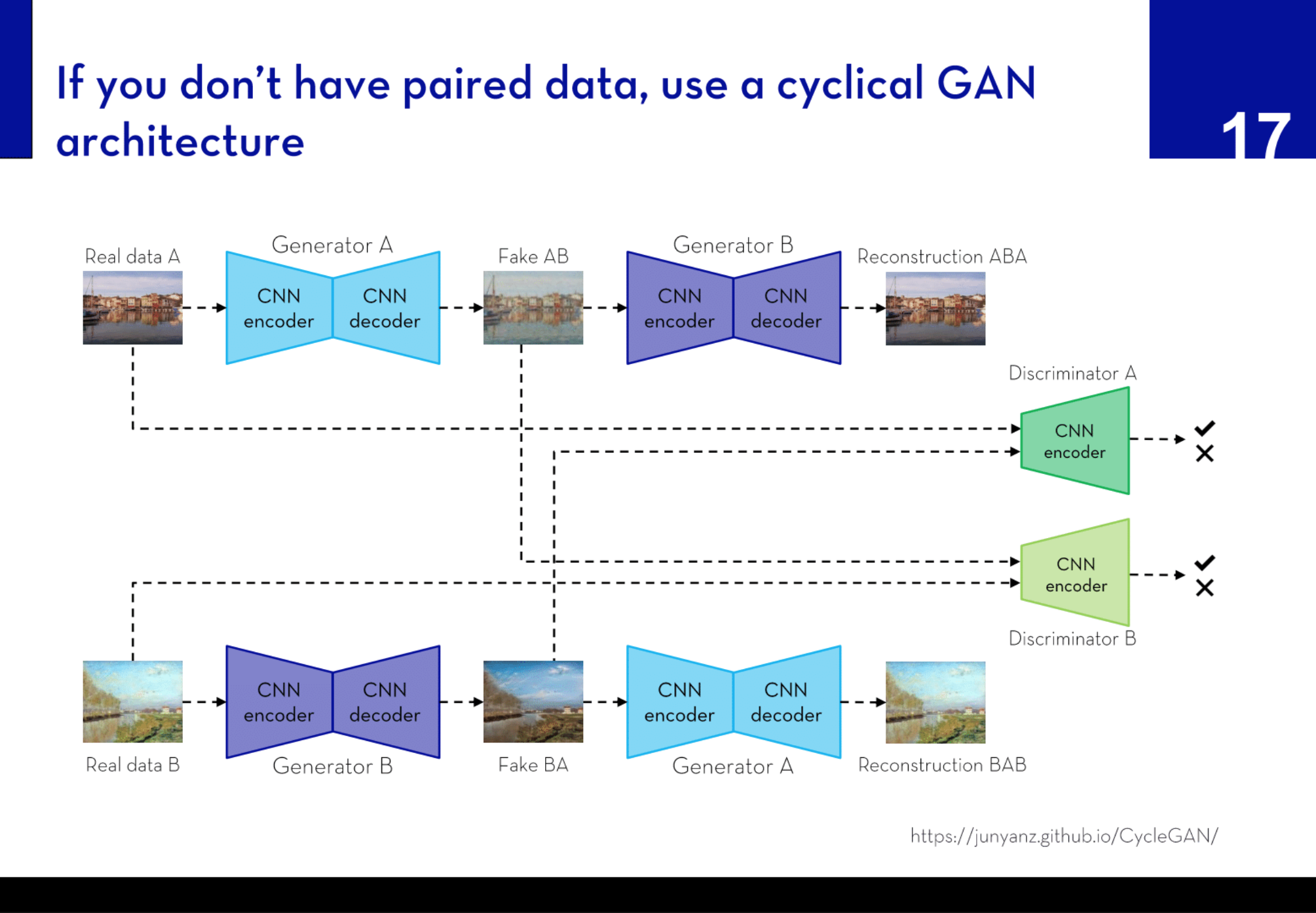

Summary: In this seminar, marketing students were introduced to deep learning. The lecture series offered an in-depth exploration of neural network architectures, from feed-forward networks such as multi-layer perceptrons (MLPs) and convolutional neural networks (CNNs) to recurrent neural networks (RNNs) and generative adversarial networks (GANs).

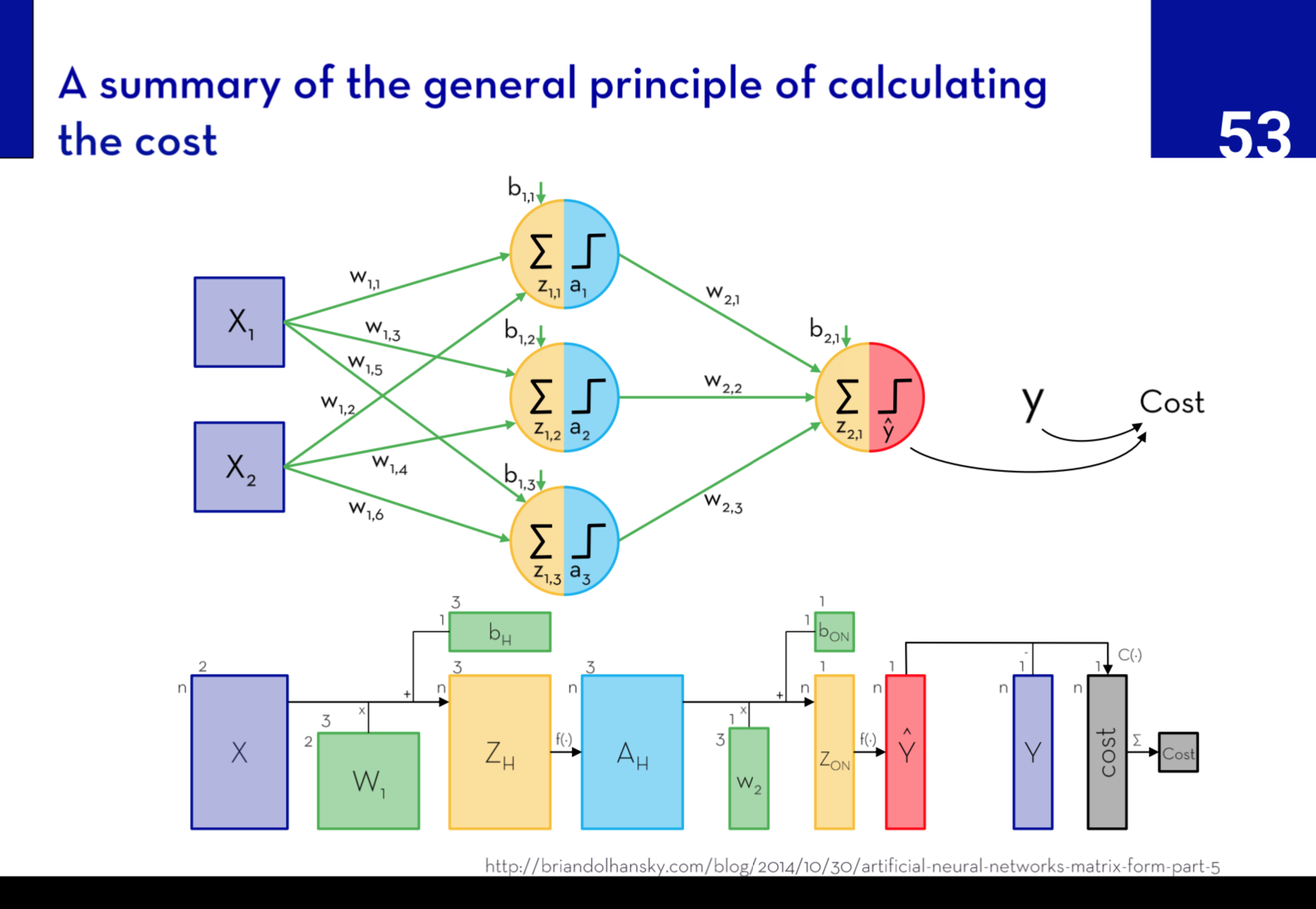

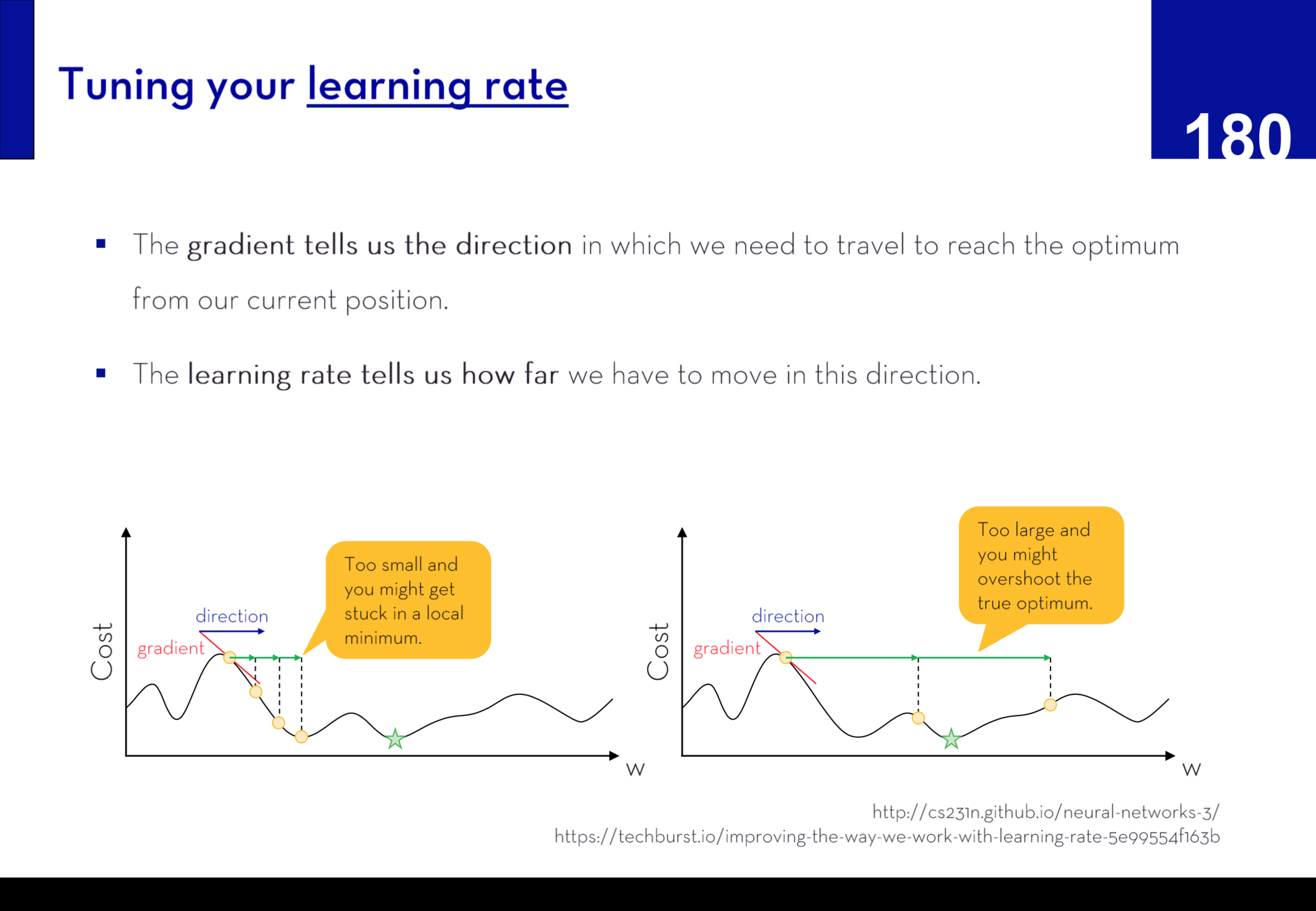

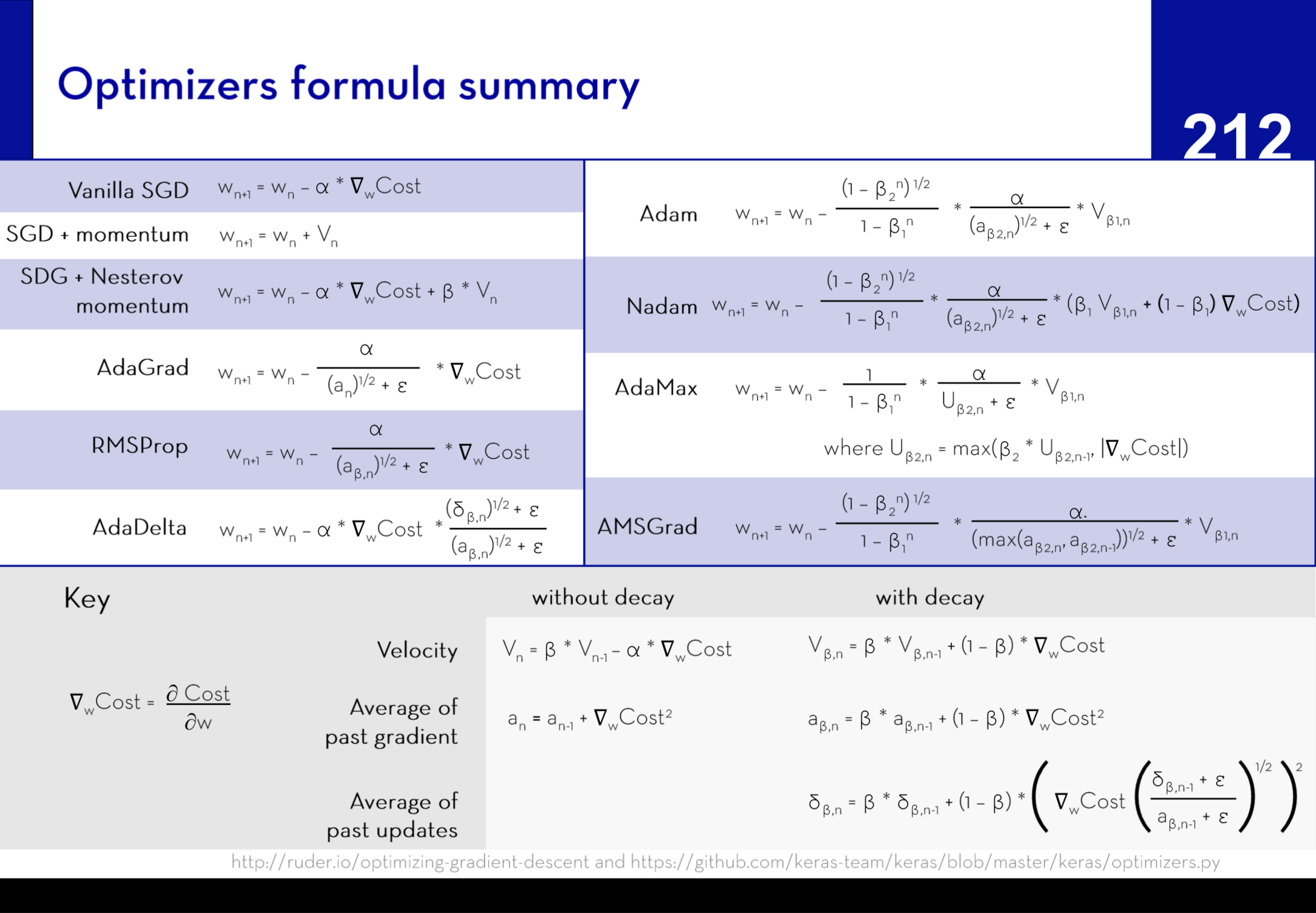

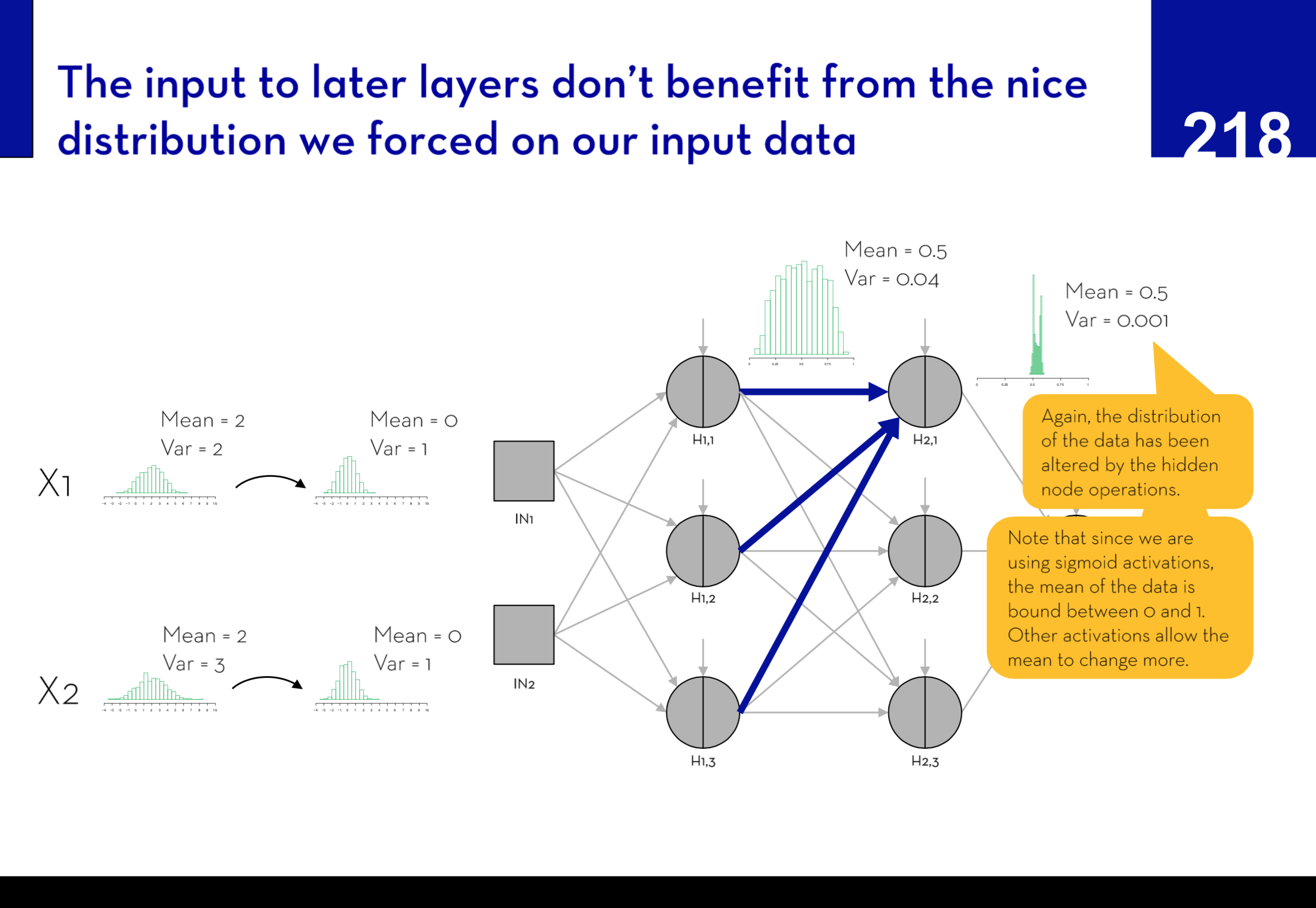

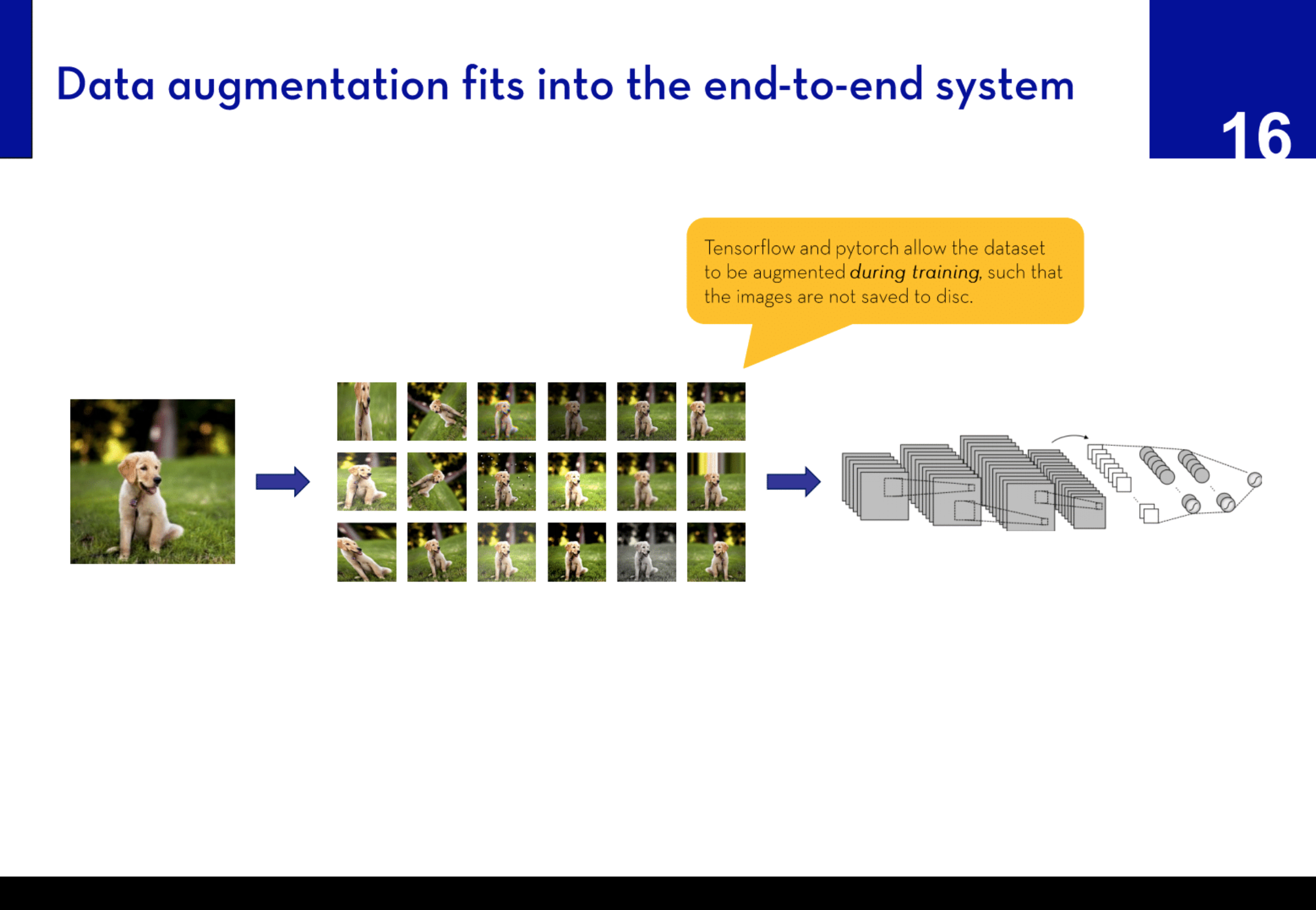

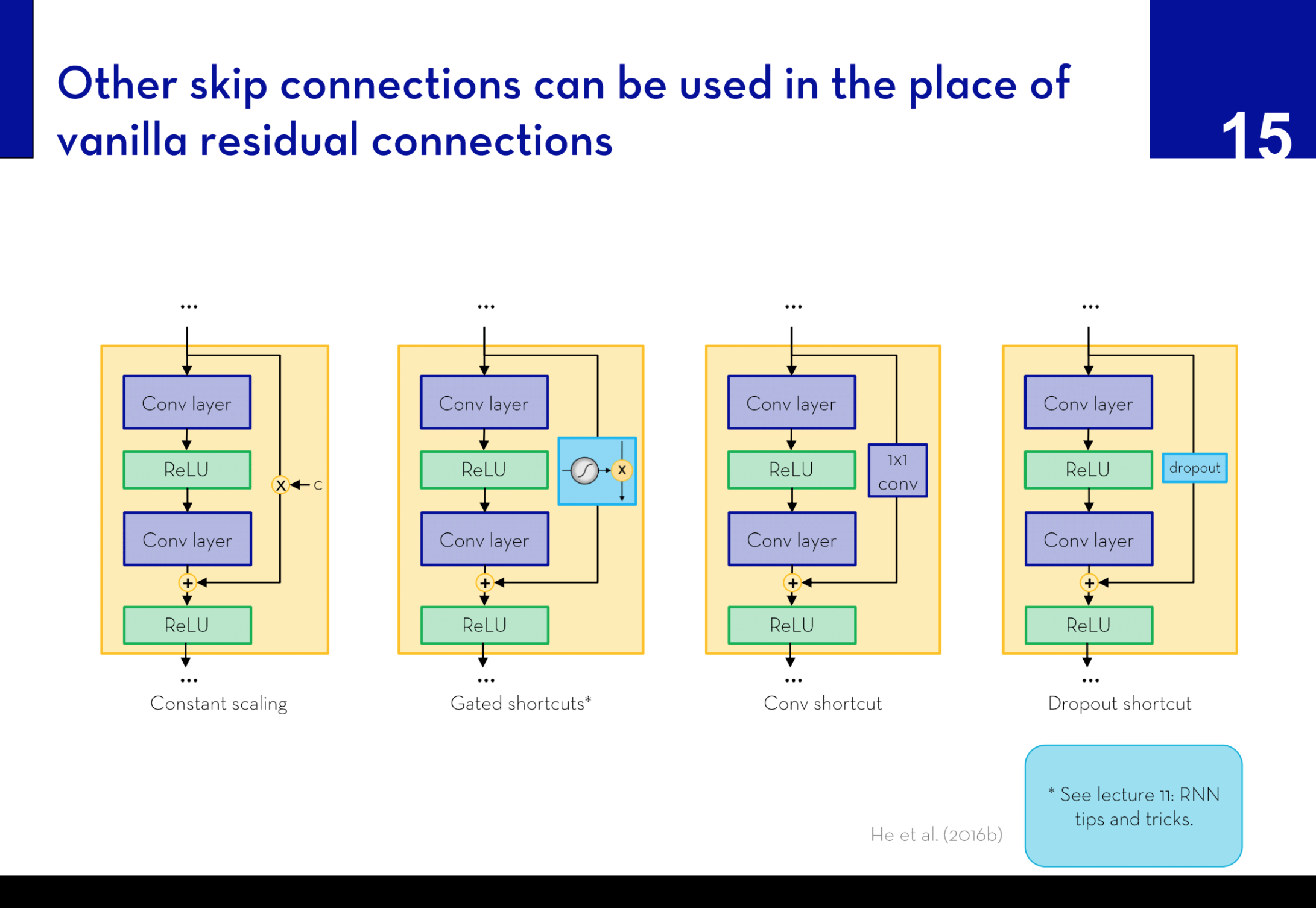

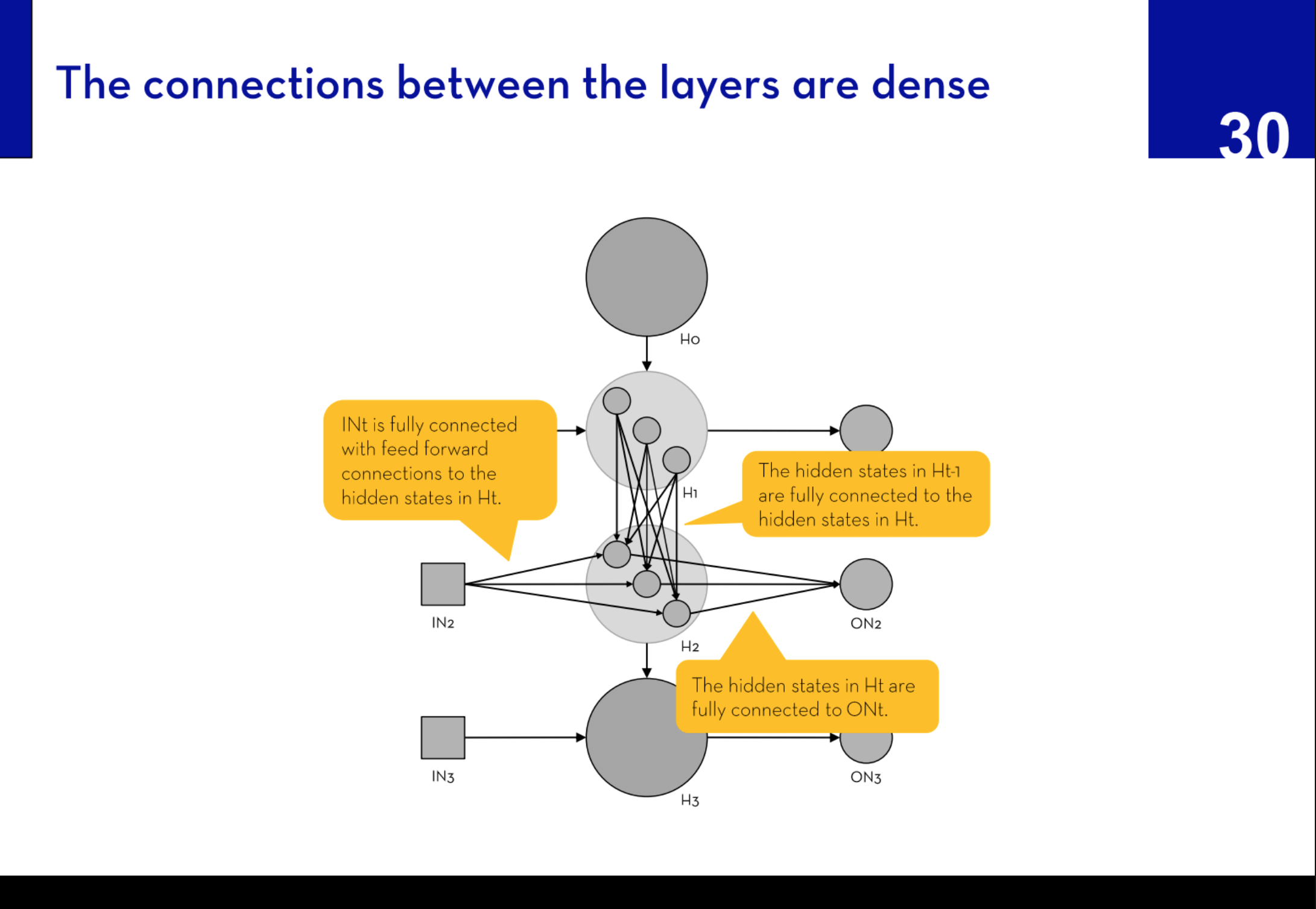

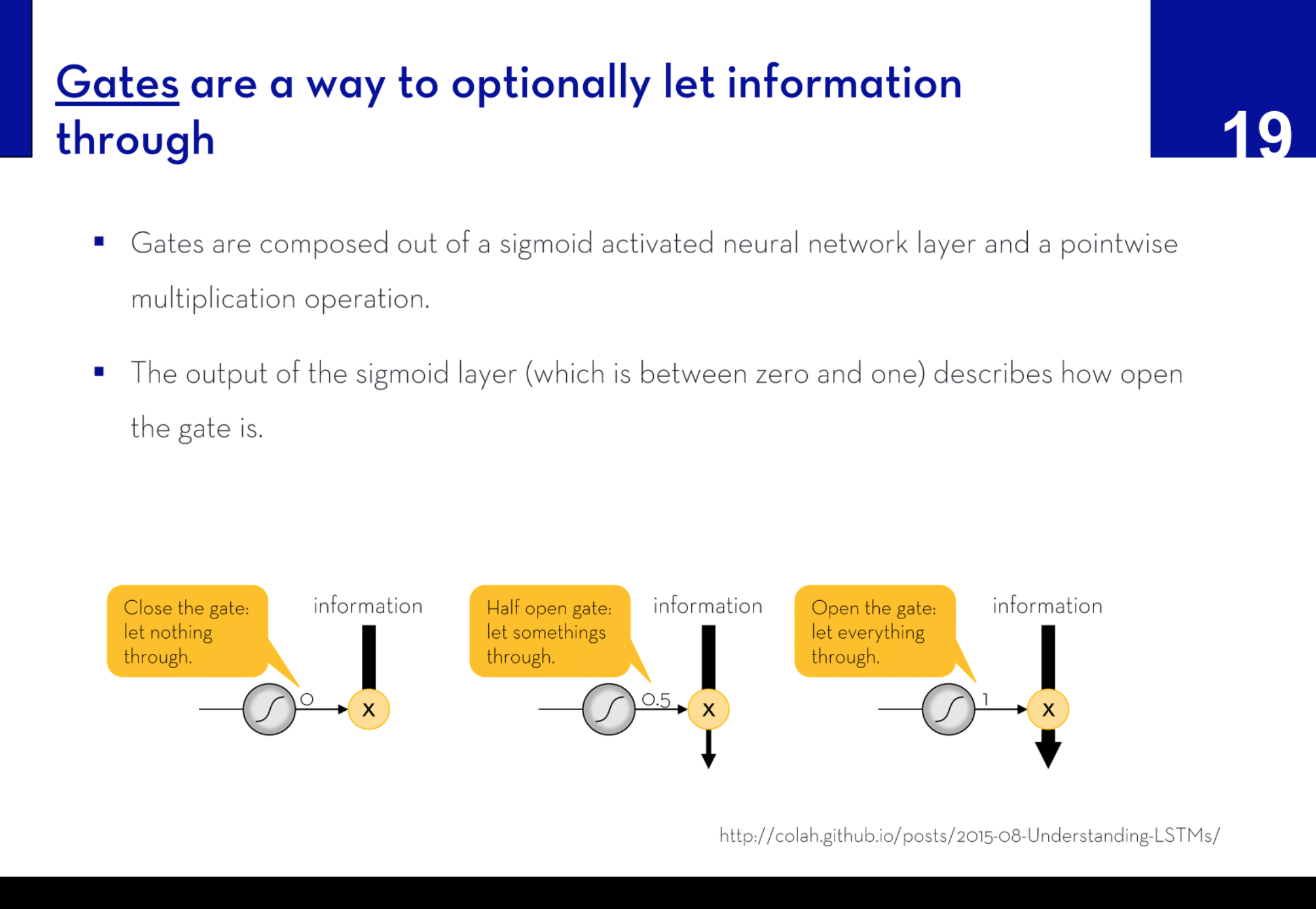

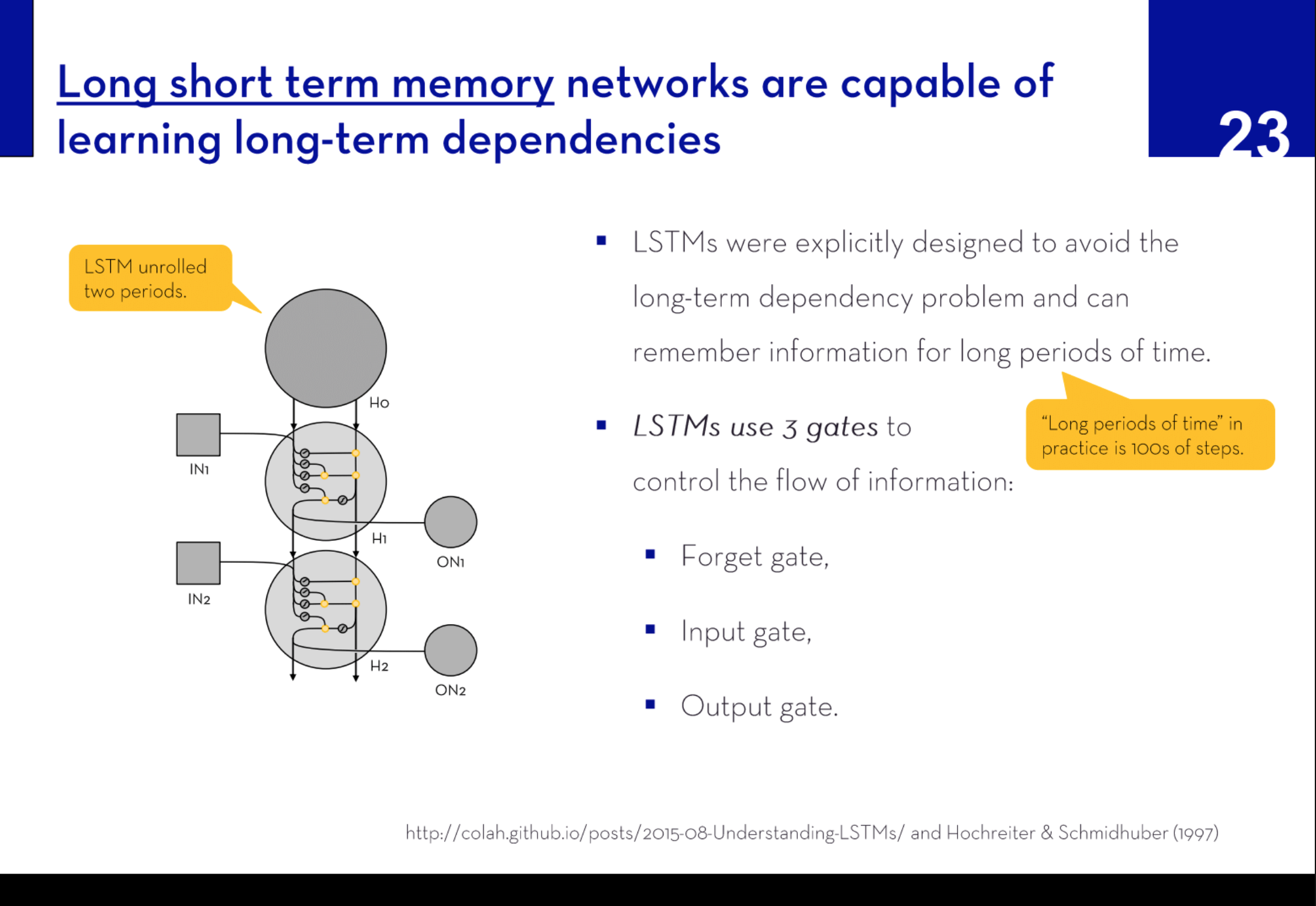

The initial sessions of each module covered the mechanics of forward propagation and backpropagation, building on the foundational principles established with single-layer feed-forward networks. The lectures then moved on to advanced topics in optimizing neural network performance, including modifying network architectures, exploring alternative models, and applying strategies to evaluate performance effectively. Practical tips covered how to achieve better convergence, reduce overfitting, and minimize memory and computational demands, all of which are key considerations in real-world applications.
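To make the forward and backward passes concrete, here is a minimal sketch of a single-layer network (one sigmoid neuron) trained with stochastic gradient descent in plain Python. The toy OR dataset, learning rate, epoch count, and function names are illustrative assumptions, not material from the seminar. With only one layer, backpropagation reduces to a single application of the chain rule, which deeper networks repeat layer by layer.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(weights, bias, x):
    # Forward propagation: weighted sum of the inputs, then the sigmoid activation
    z = sum(w * xi for w, xi in zip(weights, x)) + bias
    return sigmoid(z)


def train(data, epochs=2000, lr=0.5, seed=0):
    rng = random.Random(seed)
    weights = [rng.uniform(-0.5, 0.5) for _ in range(len(data[0][0]))]
    bias = 0.0
    for _ in range(epochs):
        for x, y in data:
            y_hat = forward(weights, bias, x)
            # Backward pass: for a sigmoid output with binary cross-entropy loss,
            # dLoss/dz simplifies to (y_hat - y)
            delta = y_hat - y
            # Chain rule: dLoss/dw_i = delta * x_i and dLoss/db = delta
            weights = [w - lr * delta * xi for w, xi in zip(weights, x)]
            bias -= lr * delta
    return weights, bias


# Hypothetical toy dataset: the logical OR function
OR_DATA = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]

weights, bias = train(OR_DATA)
for x, y in OR_DATA:
    print(x, "target:", y, "prediction:", round(forward(weights, bias, x), 3))
```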

The students then applied these skills by working in groups to design a neural network that solves a specific business problem. This project-based approach reinforced the theoretical material while giving students practical experience and the skills needed to apply these models in future projects.


Machine Learning – A Non-Technical Introduction with Applications to Marketing

Master lecture co-taught with Markus Meierer

Summary: In this lecture, students were introduced to machine learning, covering a range of techniques and how they integrate into the broader data workflow. The course began with the machine learning pipeline, emphasizing how it fits within the larger context of data handling and processing.
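One way to picture the pipeline idea is as a chain of small steps: split the data, fit preprocessing on the training portion only, train a model, and evaluate it on held-out data. The sketch below assumes a hypothetical churn dataset and uses a nearest-centroid classifier purely as a stand-in model; neither comes from the course materials, and all function names are illustrative.

```python
import random
import statistics


def train_test_split(rows, test_fraction=0.25, seed=0):
    # Shuffle a copy so the split is random but reproducible
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def fit_scaler(train_rows):
    # Learn per-feature mean and standard deviation from the TRAINING data only
    columns = list(zip(*(features for features, _ in train_rows)))
    return [(statistics.fmean(c), statistics.pstdev(c) or 1.0) for c in columns]


def transform(rows, scaler):
    # Apply the training-set statistics to any split (train or test)
    return [([(v - m) / s for v, (m, s) in zip(features, scaler)], label)
            for features, label in rows]


def fit_centroids(rows):
    # Stand-in model (nearest centroid): one mean feature vector per class
    by_label = {}
    for features, label in rows:
        by_label.setdefault(label, []).append(features)
    return {label: [statistics.fmean(c) for c in zip(*vectors)]
            for label, vectors in by_label.items()}


def predict(centroids, features):
    # Assign the class whose centroid is closest in squared Euclidean distance
    return min(centroids, key=lambda lbl: sum((f - c) ** 2
                                              for f, c in zip(features, centroids[lbl])))


def run_pipeline(rows):
    train, test = train_test_split(rows)
    scaler = fit_scaler(train)
    train_scaled, test_scaled = transform(train, scaler), transform(test, scaler)
    model = fit_centroids(train_scaled)
    correct = sum(predict(model, f) == y for f, y in test_scaled)
    return correct / len(test_scaled)


# Hypothetical customers: [annual spend, visits per month] -> outcome
CUSTOMERS = [
    ([120.0, 1.0], "churn"), ([200.0, 2.0], "churn"),
    ([150.0, 3.0], "churn"), ([250.0, 1.0], "churn"),
    ([900.0, 8.0], "stay"), ([1100.0, 10.0], "stay"),
    ([1200.0, 12.0], "stay"), ([1000.0, 9.0], "stay"),
]

print("held-out accuracy:", run_pipeline(CUSTOMERS))
```

Fitting the scaler on the training split alone keeps test-set statistics from leaking into training, which is one reason to treat preprocessing as part of the pipeline rather than as a separate step.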

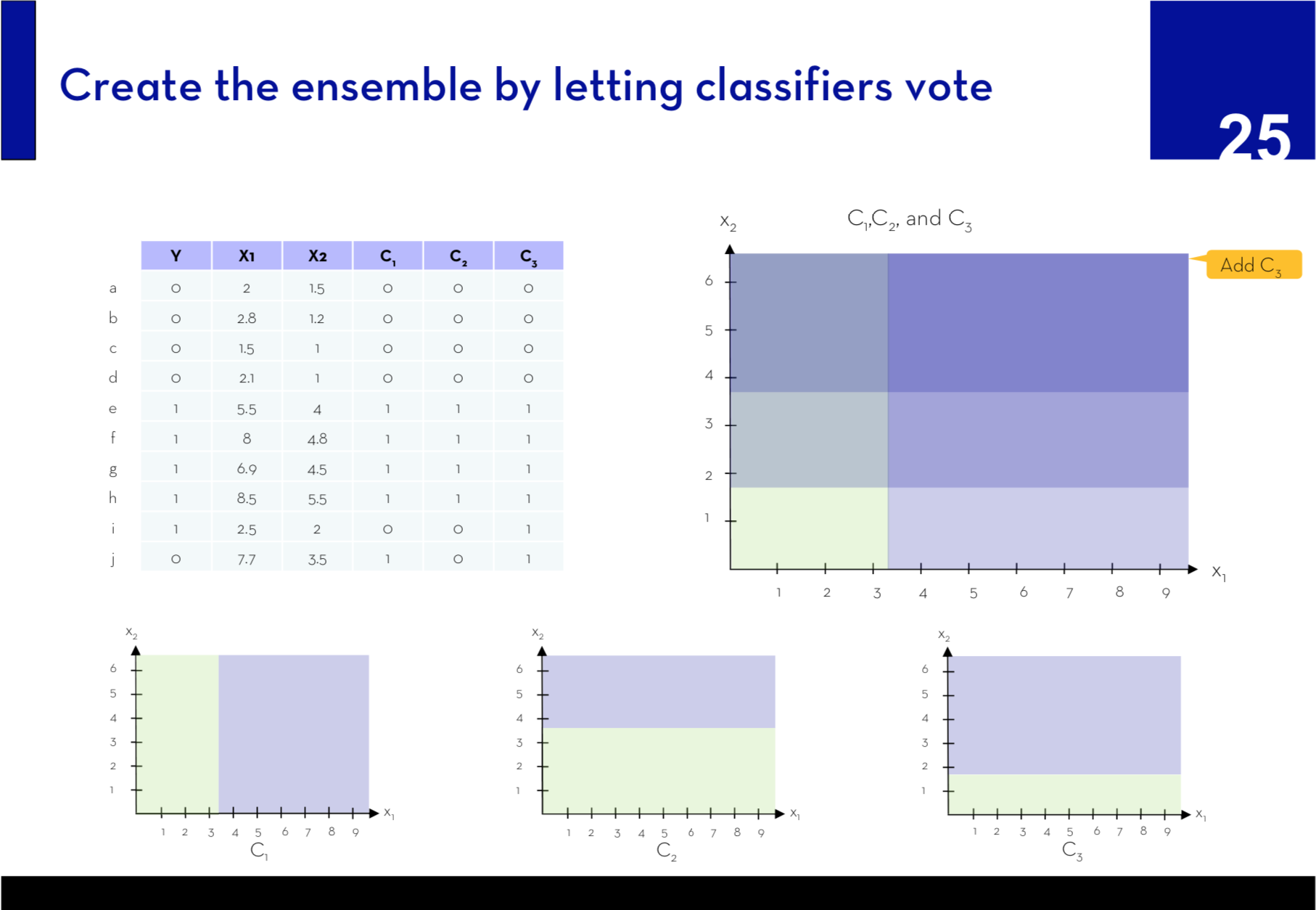

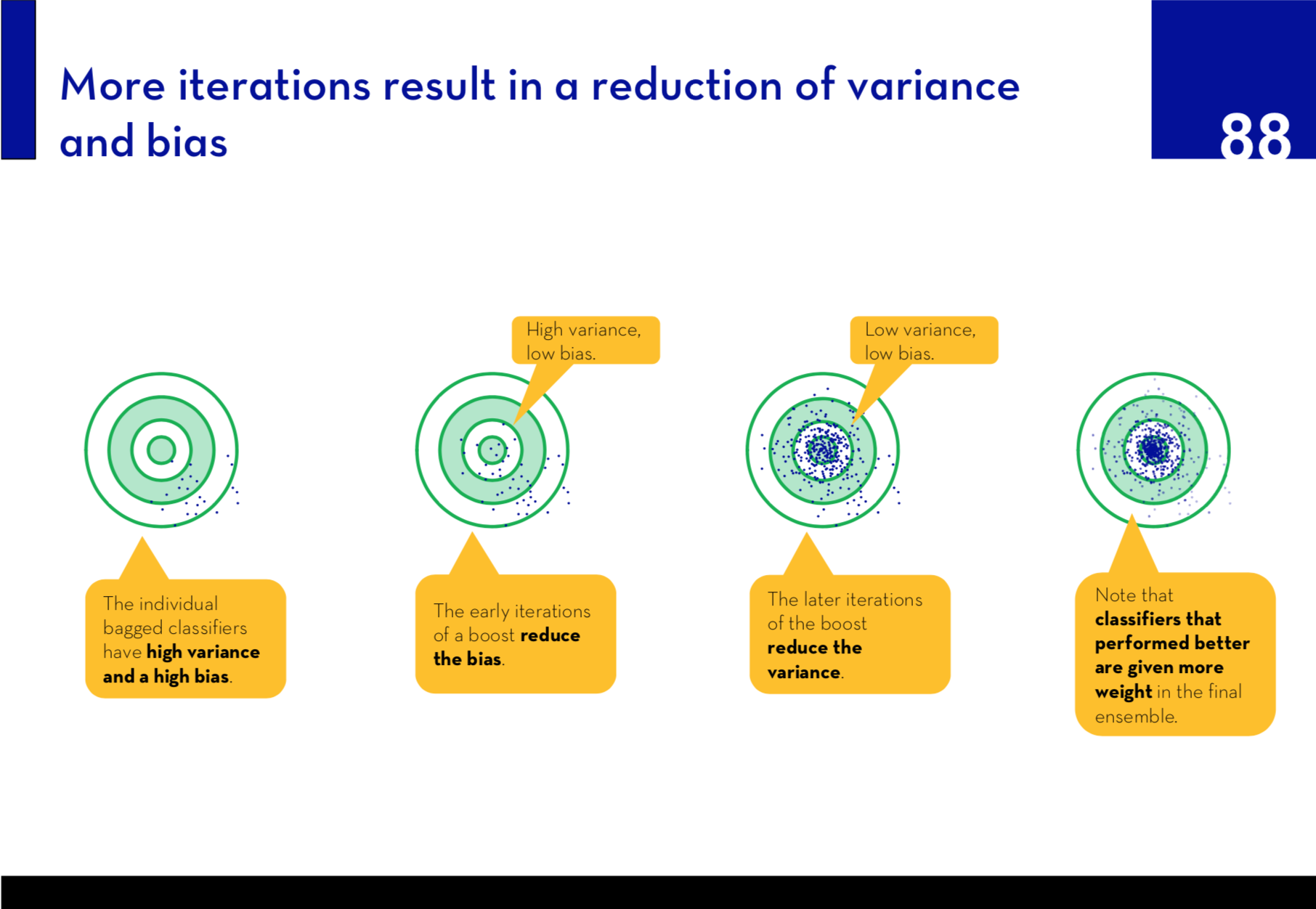

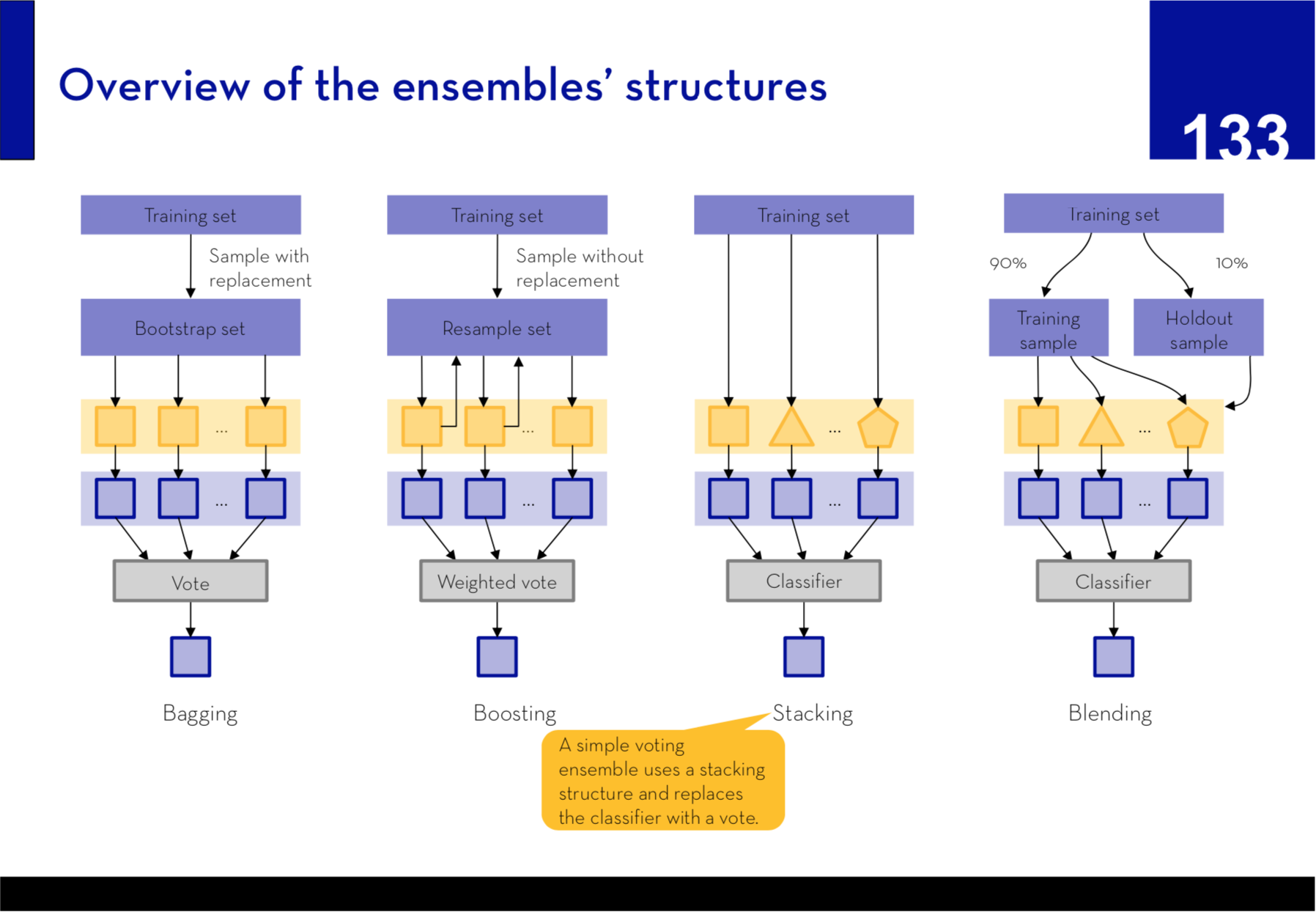

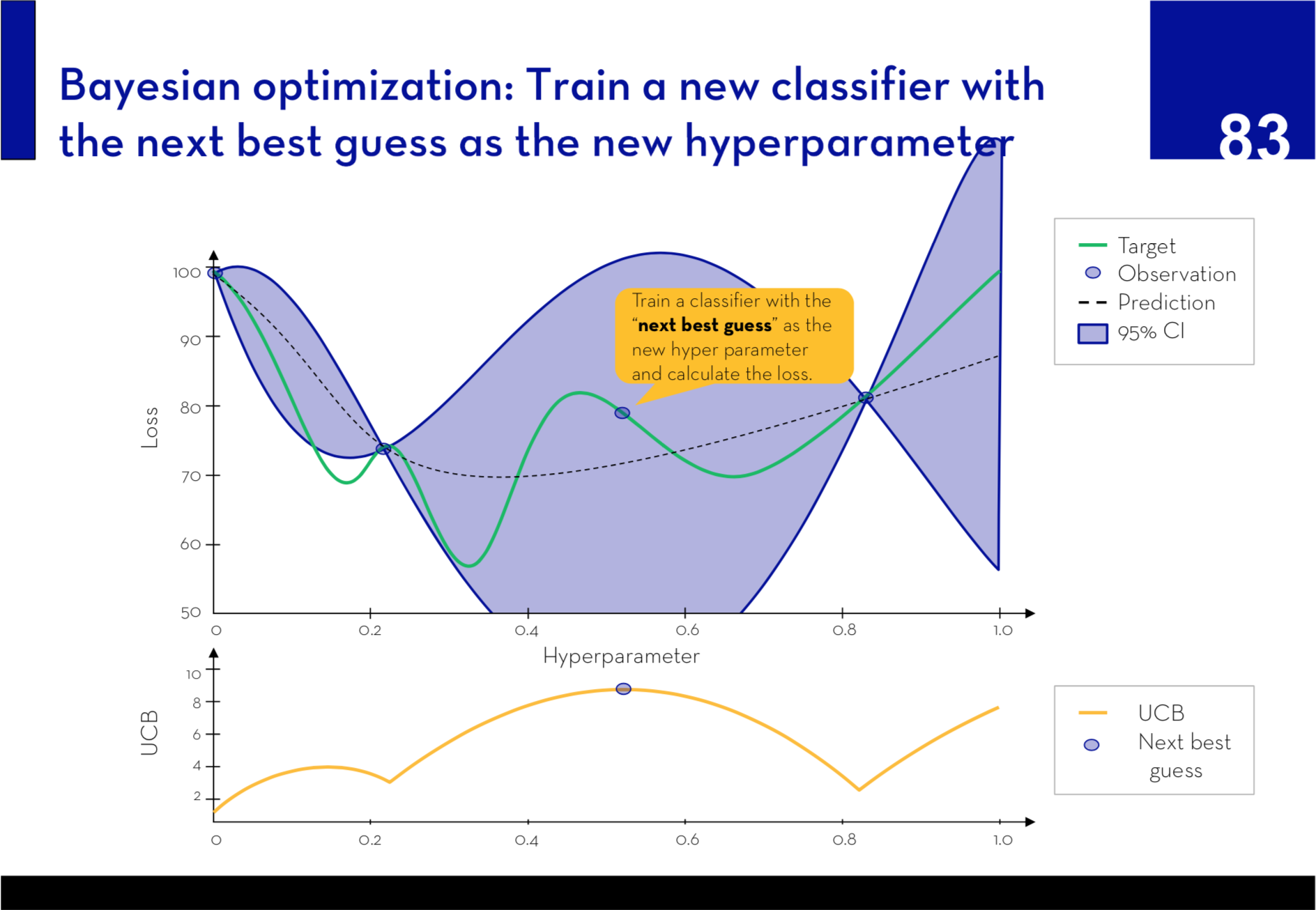

Key topics included k-nearest neighbors (KNN), logistic regression, lasso and ridge regularization, support vector machines (SVMs), random forests, and neural networks, along with ensemble techniques such as bagging and boosting. A distinctive feature of our approach was breaking down each method into its core components. Students trained each model on deliberately over-simplified toy datasets, which helped them understand the underlying mechanisms and how to tune each model's hyperparameters.
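As an example of breaking a method down to its core components, k-nearest neighbors fits in a few lines: measure distances, keep the k closest training points, and take a majority vote. The customer features, labels, function names, and k=3 below are hypothetical toy values in the spirit of the over-simplified datasets described above.

```python
import math
from collections import Counter


def euclidean(a, b):
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


def knn_predict(train, query, k=3):
    # Sort training points by distance to the query and keep the k closest
    neighbors = sorted(train, key=lambda item: euclidean(item[0], query))[:k]
    # Majority vote among the labels of those k neighbors
    votes = Counter(label for _, label in neighbors)
    return votes.most_common(1)[0][0]


# Hypothetical toy data: (site visits per month, pages per visit) -> purchased?
TOY_CUSTOMERS = [
    ((1, 2), "no"), ((2, 1), "no"), ((2, 3), "no"),
    ((8, 7), "yes"), ((9, 6), "yes"), ((7, 8), "yes"),
]

print(knn_predict(TOY_CUSTOMERS, (8, 8), k=3))  # -> yes
print(knn_predict(TOY_CUSTOMERS, (1, 1), k=3))  # -> no
```

Here k is the hyperparameter students would tune: small values follow individual points closely, while larger values smooth the decision boundary.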

Further, the course explored critical aspects of model evaluation, including the bias-variance trade-off, which is central to developing robust machine learning models. We also introduced practical strategies for deploying trained models, giving students a well-rounded foundation for both understanding and implementing machine learning solutions.
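The bias-variance trade-off can be observed directly by simulation. This sketch assumes a one-feature linear model without intercept, Gaussian noise, and made-up values for the true slope, design points, and ridge penalty; it repeatedly redraws training data and compares the squared bias and variance of the ridge estimate with and without regularization.

```python
import random
import statistics


def ridge_slope(xs, ys, lam):
    # Closed-form ridge estimate for y = w * x (no intercept):
    # w = sum(x * y) / (sum(x^2) + lam); lam = 0 gives ordinary least squares
    return sum(x * y for x, y in zip(xs, ys)) / (sum(x * x for x in xs) + lam)


def bias_variance(lam, true_w=2.0, noise_sd=1.0, trials=2000, seed=42):
    rng = random.Random(seed)
    xs = [1.0, 2.0, 3.0, 4.0, 5.0]  # fixed (hypothetical) design points
    estimates = []
    for _ in range(trials):
        # Draw a fresh noisy training set from the same true model
        ys = [true_w * x + rng.gauss(0.0, noise_sd) for x in xs]
        estimates.append(ridge_slope(xs, ys, lam))
    bias_sq = (statistics.fmean(estimates) - true_w) ** 2
    variance = statistics.pvariance(estimates)
    return bias_sq, variance


for lam in (0.0, 20.0):
    b, v = bias_variance(lam)
    print(f"lambda={lam:>5}: bias^2={b:.4f}, variance={v:.4f}")
```

Because the fixed seed reuses the same noise draws for both penalties, each ridge estimate here is exactly the unpenalized estimate scaled by sum(x²) / (sum(x²) + lambda): the penalty shrinks the variance at the cost of added bias.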
